TL;DR

The Weibull reliability model is sometimes used to estimate a product's ship date. This post explains why that does not work. The post is aligned with the Black Box Software Testing Foundations course (BBST) designed by Rebecca Fiedler, Cem Kaner, and James Bach.

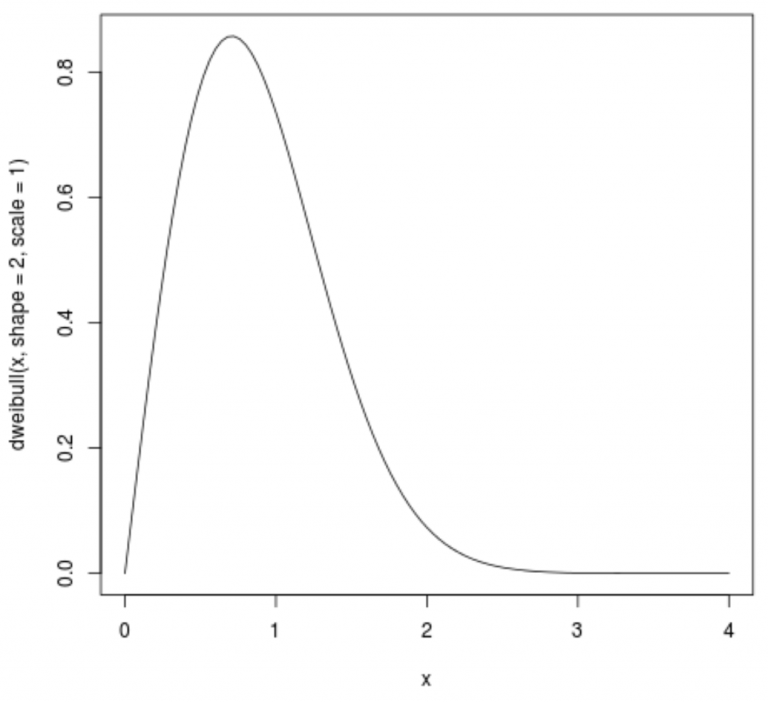

In the Weibull model, the X-axis represents time, while the Y-axis is the probability of occurrence. To use the model for ship date estimation, we need to estimate the parameters of the Weibull curve. Some projects assume that the defect arrival rate is suitable for this purpose. We could measure the number of open bugs per week or the ratio of fixed to found bugs per week.
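To make the idea concrete, here is a minimal sketch of what a fitted Weibull curve would predict for weekly defect arrivals. It assumes the standard two-parameter Weibull distribution. The function names, the total of 200 defects, and the shape and scale values are hypothetical and chosen only for illustration.

```python
import math


def weibull_cdf(t, shape, scale):
    """Fraction of all defects the model expects to have arrived by time t (t >= 0)."""
    return 1.0 - math.exp(-((t / scale) ** shape))


def expected_weekly_defects(total_defects, shape, scale, weeks):
    """Expected number of newly arriving defects in each of weeks 1..weeks."""
    return [
        total_defects
        * (weibull_cdf(week, shape, scale) - weibull_cdf(week - 1, shape, scale))
        for week in range(1, weeks + 1)
    ]


# Hypothetical parameters: 200 defects in total, shape 2.0, scale 8.0 weeks.
counts = expected_weekly_defects(total_defects=200, shape=2.0, scale=8.0, weeks=20)
peak_week = counts.index(max(counts)) + 1

for week, count in enumerate(counts, start=1):
    print(f"week {week:2d}: {count:5.1f} expected new defects")
print("peak week:", peak_week)
```

In a project that uses this model, the shape and scale would be estimated from the observed weekly counts, and the tail of the curve would then be read as the point where few defects remain.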

Implausible Assumptions

When we create a model of something, we make assumptions. Using bug counts for ship date estimation relies on the following set of assumptions [Simmons]:

- We test the application the same way its users will use it

- All defects have the same probability of occurrence

- Defects are fixed immediately, without introducing new defects

- All defects are independent

- Number of defects is finite

- Defect arrival rate follows the Weibull curve

- Timeboxed testing activities are independent

If you have some testing experience, then you know that those assumptions are not plausible.

The main feature of the Weibull curve is that it first rises and then falls. Bug count rates will follow the Weibull curve. The problem is that using bug counts to estimate the shipping date relies on a surrogate measure: bug count rates are not related to the shipping date. Here is why.

Under this model, we want the curve to peak early, because an early peak implies an earlier decline in the bug rate and a sooner shipping date. In reality, the project team (not only testers!) will start adopting dysfunctional methods to make the bug arrival rate fit the Weibull curve [Hoffman].
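The link between an early peak and an early predicted ship date can be sketched with the standard Weibull formulas for the mode and the quantile. This is only an illustration: the function names, the parameter values, and the "95% of defects found" release threshold are assumptions, not something the model or the cited sources prescribe.

```python
import math


def weibull_peak_week(shape, scale):
    """Time at which the Weibull curve peaks (its mode); 0 when shape <= 1."""
    if shape <= 1:
        return 0.0
    return scale * ((shape - 1) / shape) ** (1 / shape)


def week_when_fraction_found(fraction, shape, scale):
    """Time by which the model expects the given fraction of defects to have arrived."""
    return scale * (-math.log(1 - fraction)) ** (1 / shape)


# Two hypothetical projects with the same shape but different scale (in weeks).
for scale in (10.0, 6.0):
    peak = weibull_peak_week(shape=2.0, scale=scale)
    ship = week_when_fraction_found(0.95, shape=2.0, scale=scale)
    print(f"scale {scale:4.1f}: peak at week {peak:.1f}, 95% found by week {ship:.1f}")
```

With the shape held fixed, both the peak and the predicted "done" week scale with the same parameter, which is why anyone judged by this model has an incentive to pull the peak forward.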

The goal becomes finding a lot of bugs early. Testers would hunt for easy functional bugs, not hard-to-find ones. Other testing techniques would not be used (product environment, security, scenario testing, and risk analysis are some of them).

To report fewer bugs later in the project, the team would:

- rerun regression tests instead of looking for new bugs

- keep bugs out of the tracking system

- avoid hunting for hard bugs

- mark found bugs as duplicates

- have developers stop testing

- reject more bugs

Remember

People optimize what we measure them against, at the expense of what we do not measure.